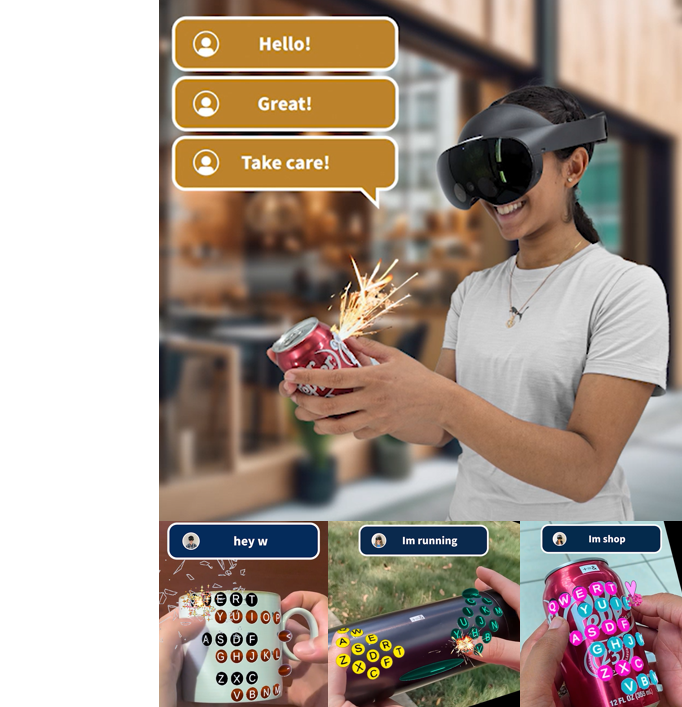

Wearable & Tangible Interfaces

Designing low-barrier, body-centric systems that leverage the human form for natural interaction in both physical and virtual spaces.

Laboratory of Next-generation Experience and Technology

Our research is grounded in Embodied Interaction, emphasizing seamless integration between the human body and digital technologies. We envision interaction paradigms where the body becomes the primary medium of input and output, enabling intuitive, expressive, and multisensory experiences.

Integrating tactile, thermal, and auditory cues to enrich immersion and presence across XR environments.

Creating adaptive interfaces that respond dynamically to user context, environment, and task demands.

Building experimental platforms to rapidly test new concepts with rigorous user studies and metrics.

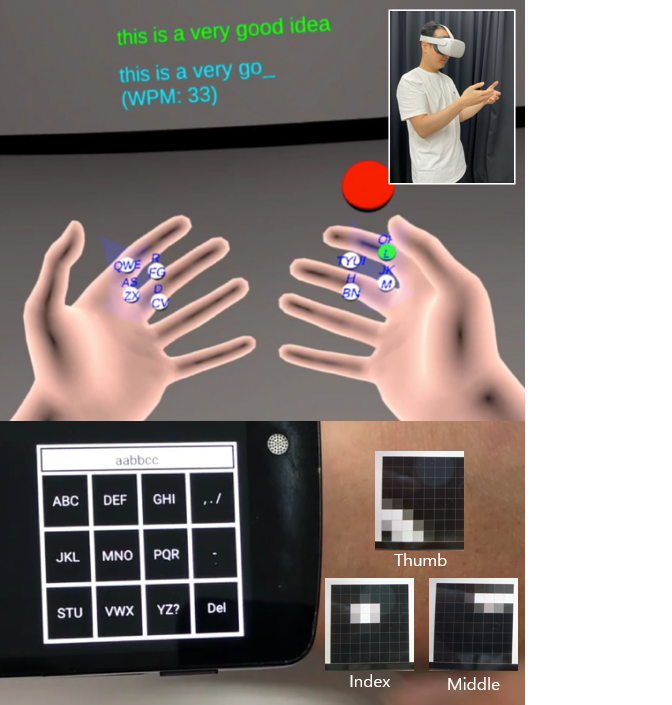

We envision a future where input systems seamlessly capture the full bandwidth of human expression, from fine-grained finger motions to whole-body gestures, bridging the gap between physical and virtual worlds. Our research investigates:

Leveraging computer vision, wearable sensors, and hybrid sensing techniques to achieve accurate, low-latency motion capture in 3D space.

Combining gestures with voice, gaze, haptics, and context-aware signals to create richer input channels.

Developing machine learning models that adapt input techniques to individual users, tasks, and environments.

Building experimental platforms to systematically evaluate input bandwidth, accuracy, and user experience across diverse application domains.

We envision a future where the boundary between real and virtual worlds dissolves, enabling users to experience seamless and lifelike interactions that engage multiple senses simultaneously. To achieve this, our research explores:

Designing interfaces that combine haptic, auditory, visual, and thermal cues to replicate real-world sensations and enhance perceptual realism.

Developing interaction paradigms that unify physical robots, tangible interfaces, and virtual agents within a single immersive ecosystem.

Creating user-centered evaluation frameworks to measure presence, realism, and cognitive engagement in both real and virtual contexts.

Leveraging AI-driven models to dynamically adapt sensory feedback based on user states, environmental factors, and interaction intent.